Available with Image Analyst license.

Use the Classify Objects Using Deep Learning tool to classify objects in imagery. The tool runs a trained deep learning model on an input raster and assigns a class label to each input feature. The image below is an example of object classification using deep learning tools.

A typical object classification workflow using deep learning consists of three main steps:

- Create and export training samples. Create training samples using the Label Objects for Deep Learning pane, and use the Export Training Data For Deep Learning tool to convert the samples to deep learning training data.

- Train the deep learning model. Use the Train Deep Learning Model tool to train a model using the training samples you created in the previous step.

- Perform inferencing. Use the Classify Objects Using Deep Learning tool with the model you created in step 2.

For more examples, supported metadata formats, and model type architectures, see Deep learning model architectures.

Create and export training samples

Create a training schema and training samples, and export the training data.

If you have existing training samples in a raster dataset or a feature class, you can use the Export Training Data For Deep Learning tool and proceed to the Train a deep learning model section below.

- Create a training schema.

- Add the image to be used to generate the training samples to a map.

- In the Contents pane, select the image that you added.

- Click the Imagery tab.

- Click Deep Learning Tools, and click Label Objects for Deep Learning.

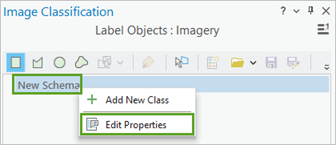

The Image Classification pane appears with a blank schema.

- In the Image Classification pane, right-click New Schema and click Edit Properties.

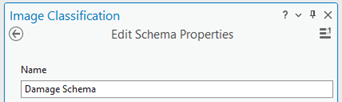

- Provide a name for the schema.

- Click Save.

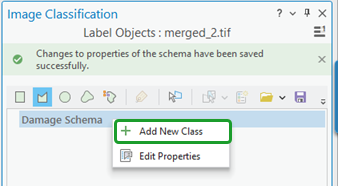

- Add a new class to the schema.

- Right-click the schema you created, and choose Add New Class.

- Provide a name for the class.

- Provide a value for the class.

The value cannot be 0.

- Optionally, choose a color for the class.

- Click OK.

The class is added to the schema in the Image Classification pane.

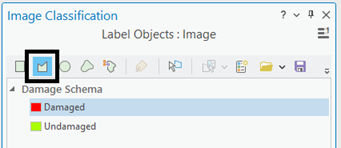

- Optionally, repeat steps 2a through 2e to add more classes.

- Create training samples.

- In the Image Classification pane, select the class you want to create a training sample for.

- Choose a drawing tool, such as Polygon.

- Draw a polygon around the pixels that you want to classify.

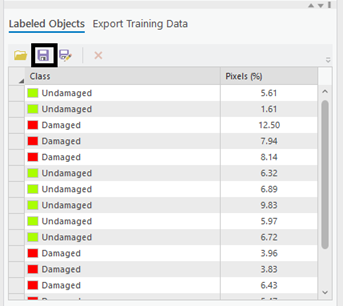

A new record is added to the Labeled Objects group in the Image Classification pane.

- Repeat steps 3a through 3c to create training samples for all the classes.

Since the final model will take into account the size of the objects you identify, you can select objects of various sizes.

- When you finish creating samples, click Save in the Image Classification pane.

- In the Save current training sample window, browse to the geodatabase.

- Provide a name for the feature class, and click Save.

Before you can train the model, you must export the training samples as image chips. An image chip is a small image that contains one or more objects to be detected. An image chip is created and labeled for each training sample for the classes in the schema.

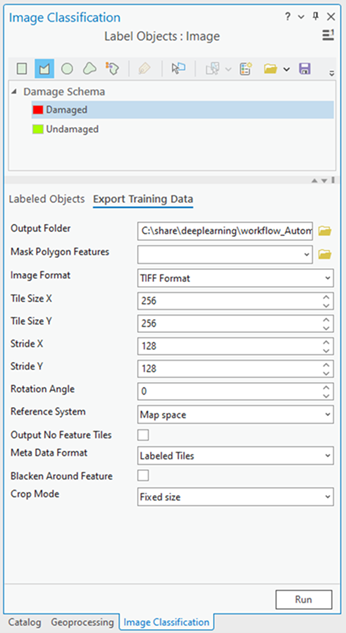

- In the Image Classification pane, click the Export Training Data tab.

- Provide the Output Folder value.

This is the path and name of the folder where the output image chips and metadata will be stored.

- Optionally, choose the Metadata Format value.

The metadata formats supported for the feature classifier model type are Labeled Tiles, Imagenet, and Multilabeled Tiles.

- Click Run to export the training data.
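
The export step can also be scripted. The following is a minimal sketch, assuming the `arcpy.ia.ExportTrainingDataForDeepLearning` function accepts the keyword names shown; verify them against the tool reference for your ArcGIS Pro version. The `export_kwargs` and `run_export` helpers and all paths are hypothetical, and the keyword spellings of the three metadata formats are assumptions.

```python
# Metadata formats listed for the feature classifier model type, mapped to
# the keyword spellings assumed for the geoprocessing tool.
FEATURE_CLASSIFIER_FORMATS = {
    "Labeled Tiles": "Labeled_Tiles",
    "Imagenet": "Imagenet",
    "Multilabeled Tiles": "MultiLabeled_Tiles",
}


def export_kwargs(image, samples, out_folder, metadata_format="Labeled Tiles"):
    """Assemble keyword arguments for the export tool (hypothetical helper)."""
    if metadata_format not in FEATURE_CLASSIFIER_FORMATS:
        raise ValueError(f"Unsupported metadata format: {metadata_format!r}")
    return {
        "in_raster": image,
        "out_folder": out_folder,
        "in_class_data": samples,
        "image_chip_format": "TIFF",
        "metadata_format": FEATURE_CLASSIFIER_FORMATS[metadata_format],
    }


def run_export(kwargs):
    """Run the export; requires ArcGIS Pro with an Image Analyst license."""
    import arcpy  # imported lazily so export_kwargs works without ArcGIS

    arcpy.CheckOutExtension("ImageAnalyst")
    return arcpy.ia.ExportTrainingDataForDeepLearning(**kwargs)


# Hypothetical paths, for illustration only.
params = export_kwargs(
    image=r"C:\data\scene.tif",
    samples=r"C:\data\project.gdb\training_samples",
    out_folder=r"C:\data\chips",
)
print(params["metadata_format"])
```

Call `run_export(params)` inside an ArcGIS Pro Python environment to perform the export. Keeping the argument assembly separate from the `arcpy` call lets you check the parameters before any data is written.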

Train a deep learning model

The Train Deep Learning Model tool uses the labeled image chips to learn the combinations of pixels in each image that represent the object. You will use these training samples to train a deep learning model. In the tool, only the Input Training Data and Output Model parameters are required.

Since the input training data is based on the Metadata Format value, an appropriate Model Type default value is determined. For example, if you specified the Labeled Tiles metadata format in the export process, the Feature Classifier option is specified for the Model Type parameter. The Model Type drop-down list is also updated with the model types that support the Labeled Tiles metadata format. The Batch Size, Model Arguments, and Backbone Model parameters are also populated based on the Model Type parameter value.

- Open the Train Deep Learning Model tool.

- For the Input Training Data parameter, browse to and select the training data folder where the image chips are stored.

- For the Output Model parameter, provide the file path and name of the folder where the output model will be saved after training.

- Optionally, specify a value for the Max Epochs parameter.

An epoch is a full cycle through the training dataset. During each epoch, the training dataset you stored in the image chips folder is passed forward and backward through the neural network one time. Generally, 20 to 50 epochs are used for initial review. The default value is 20. If the model can be further improved, you can retrain it using the same tool.

- Ensure that the Feature Classifier (Object classification) value is selected in the Model Type parameter drop-down list.

The model type determines the deep learning algorithm and neural network that will be used to train the model, such as the Feature Classifier model architecture. For more information about models, supported metadata, and model architecture details, see Deep learning model architectures and Deep Learning Models in ArcGIS.

- Optionally, change the Model Arguments parameter value.

The Model Arguments parameter is populated with information from the model definition. These arguments vary depending on the model architecture that was specified. A list of the model arguments supported by the tool is available in the Model Arguments parameter.

- Optionally, set the Batch Size parameter value.

This parameter determines the number of training samples that are processed at a time. An appropriate batch size depends on factors such as the number of image chips, the available GPU memory (if a GPU is used), and the learning rate (if a custom value is used). Typically, the default batch size produces good results.

- Optionally, provide the Learning Rate parameter value.

If no value is provided, the optimal learning rate is extracted from the learning curve during the training process.

- Optionally, specify the Backbone Model parameter value.

The default value is based on the model architecture. You can change the default backbone model using the drop-down list.

- Optionally, provide the Pre-trained Model parameter value.

A pretrained model with similar classes can be fine-tuned to fit the new model. The pretrained model must have been trained with the same model type and backbone model that will be used to train the new model.

- Optionally, change the Validation % parameter value.

This is the percentage of training samples that will be used to validate the model. This value depends on various factors such as the number of training samples and the model architecture. Generally, with a small amount of training data, 10 percent to 20 percent is appropriate for validation. If there is a large amount of training data, such as several thousand samples, a lower percentage such as 2 percent to 5 percent of the data is appropriate for validation. The default value is 10.

- Optionally, check the Stop when model stops improving parameter.

When checked, the model training stops when the model is no longer improving regardless of the Max Epochs value specified. The default is checked.

- Optionally, check the Freeze Model parameter.

This parameter specifies whether the backbone layers in the pretrained model will be frozen, so that the weights and biases remain as originally designed. If you check this parameter, the backbone layers are frozen, and the predefined weights and biases of the Backbone Model parameter value are not altered. If you uncheck this parameter, the backbone layers are not frozen, and the weights and biases of the Backbone Model parameter value can be altered to fit the training samples. Unfreezing takes more time to process but typically produces better results. The default is checked.

- Click Run to start the training.
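
The training steps above can be sketched in Python. This is an illustrative sketch, not the tool's definitive usage: it assumes `arcpy.ia.TrainDeepLearningModel` accepts the keyword names and option strings shown (`FEATURE_CLASSIFIER`, `STOP_TRAINING`, `FREEZE_MODEL`), so confirm them in the tool reference. The `train_kwargs` and `run_training` helpers and the paths are hypothetical. The defaults mirror the values described above: 20 epochs, 10 percent validation, early stopping on, and a frozen backbone.

```python
def train_kwargs(chips_folder, model_folder, max_epochs=20,
                 validation_percentage=10, stop_early=True, freeze=True):
    """Assemble keyword arguments for the training tool (hypothetical helper)."""
    if max_epochs < 1:
        raise ValueError("max_epochs must be at least 1")
    if not 0 < validation_percentage < 100:
        raise ValueError("validation_percentage must be between 0 and 100")
    return {
        "in_folder": chips_folder,    # Input Training Data (required)
        "out_folder": model_folder,   # Output Model (required)
        "max_epochs": max_epochs,
        "model_type": "FEATURE_CLASSIFIER",
        "validation_percentage": validation_percentage,
        # Option strings below are assumptions; check the tool reference.
        "stop_training": "STOP_TRAINING" if stop_early else "CONTINUE_TRAINING",
        "freeze": "FREEZE_MODEL" if freeze else "UNFREEZE_MODEL",
    }


def run_training(kwargs):
    """Run the training; requires ArcGIS Pro with an Image Analyst license."""
    import arcpy  # imported lazily so train_kwargs works without ArcGIS

    arcpy.CheckOutExtension("ImageAnalyst")
    return arcpy.ia.TrainDeepLearningModel(**kwargs)


# Hypothetical folders; a larger training set might use 2 to 5 percent validation.
params = train_kwargs(r"C:\data\chips", r"C:\data\model", validation_percentage=5)
print(params["model_type"], params["freeze"])
```

Batch size, learning rate, and backbone model are left out of the dictionary on purpose so that the tool falls back to its own defaults, as the steps above recommend for a first run.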

Perform inferencing

Inferencing is the process in which information learned during the deep learning training process is used to assign classes or category labels to each of the detected objects in the input image. Use the Classify Objects Using Deep Learning tool since you are performing an object classification workflow.

- Open the Classify Objects Using Deep Learning tool.

- For the Input Raster parameter, browse to and select the input raster data you want to classify.

The input can be a single raster dataset, multiple rasters in a mosaic dataset or an image service, a folder of images, or a feature class with image attachments.

- For the Output Classified Objects Feature Class parameter, provide a name for the output feature class.

The output feature class will contain geometries surrounding the detected objects in the input image, as well as a field to store the categorization label.

- Specify the Model Definition value (*.emd or *.dlpk file).

This is the model file that contains the training output. It is produced in the Train a deep learning model section above.

- For the Arguments parameter, accept the default values or modify them.

The Arguments parameter is populated with information from the Model Definition parameter. These arguments vary depending on the model architecture that was specified. In this example, the Feature Classifier model architecture is used, so the following arguments are populated.

- batch_size: The number of image tiles processed in each step of the model inference. This value depends on the memory size of the graphics card.

- test_time_augmentation: Specifies whether test time augmentation is performed while predicting. If true, the predictions of flipped and rotated variants of the input image are merged into the final output.

- Click Run to start the inferencing.
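
The inferencing steps can be scripted in a similar way. The sketch below assumes that `arcpy.ia.ClassifyObjectsUsingDeepLearning` accepts the keyword names shown and that model arguments can be passed as a semicolon-delimited string of `name value` pairs; both are assumptions to verify against the tool reference. The helper functions and paths are hypothetical.

```python
def format_model_arguments(arguments):
    """Join name/value pairs into a 'name value;name value' string (assumed format)."""
    return ";".join(f"{name} {value}" for name, value in arguments.items())


def classify_kwargs(raster, out_feature_class, model_definition, arguments):
    """Assemble keyword arguments for the classification tool (hypothetical helper)."""
    # The Model Definition value is an .emd or .dlpk file.
    if not model_definition.lower().endswith((".emd", ".dlpk")):
        raise ValueError("model_definition must be an .emd or .dlpk file")
    return {
        "in_raster": raster,
        "out_feature_class": out_feature_class,
        "in_model_definition": model_definition,
        "model_arguments": format_model_arguments(arguments),
    }


def run_classification(kwargs):
    """Run inferencing; requires ArcGIS Pro with an Image Analyst license."""
    import arcpy  # imported lazily so the helpers above work without ArcGIS

    arcpy.CheckOutExtension("ImageAnalyst")
    return arcpy.ia.ClassifyObjectsUsingDeepLearning(**kwargs)


# Hypothetical paths and argument values, for illustration only.
params = classify_kwargs(
    raster=r"C:\data\scene.tif",
    out_feature_class=r"C:\data\project.gdb\classified_objects",
    model_definition=r"C:\data\model\model.emd",
    arguments={"batch_size": 4, "test_time_augmentation": True},
)
print(params["model_arguments"])
```

Lower the `batch_size` value if the graphics card runs out of memory during inferencing.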